Gender Digital Divide and AI Exclusion in India

Introduction

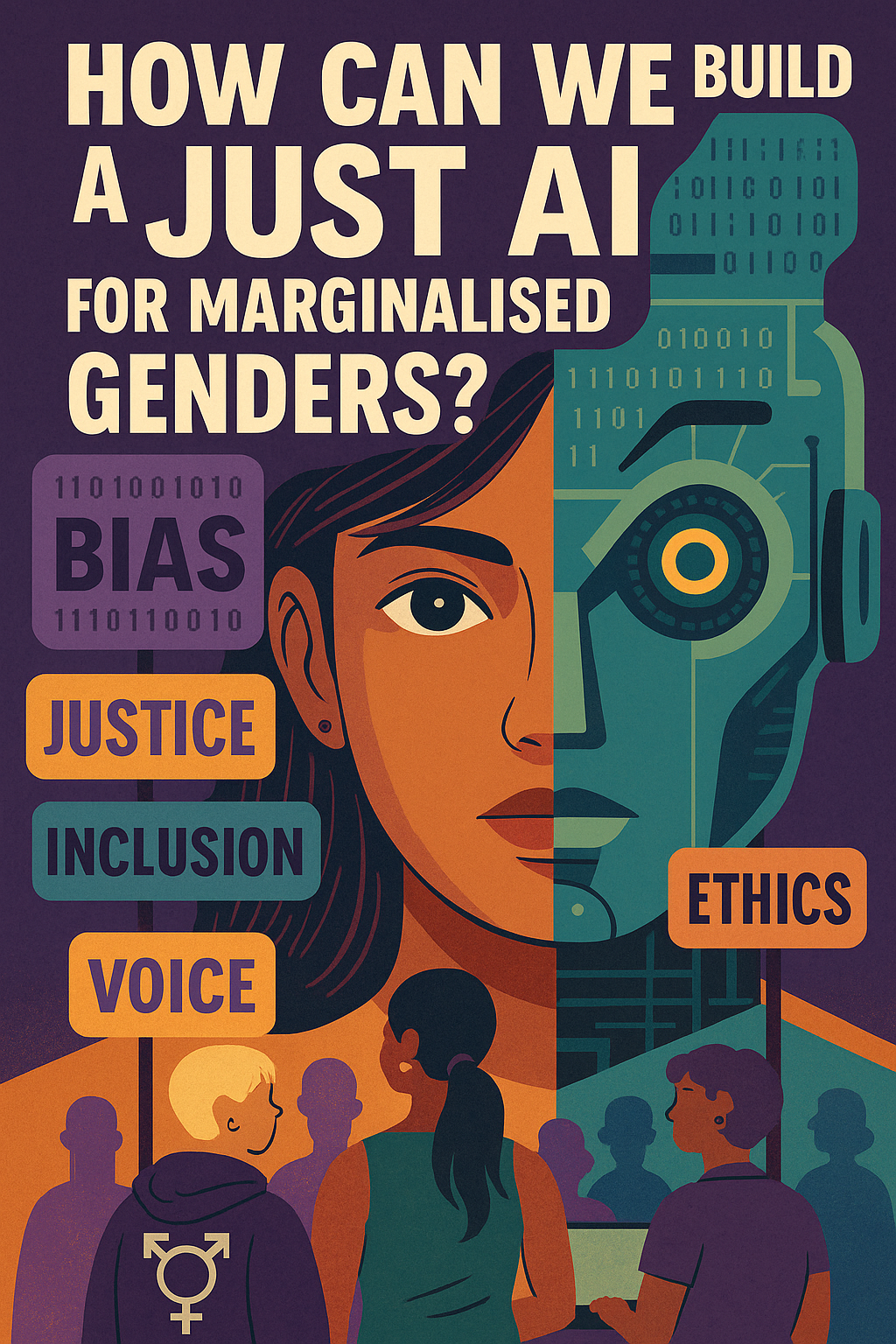

As AI shapes governance and society, its design often reflects existing inequalities rather than dismantling them. In India, the gender digital divide already limits access to technology, with women, transgender, and non-binary individuals facing systemic barriers. When AI systems reinforce these exclusions through biased hiring algorithms, predictive policing, and financial discrimination, they become tools of oppression rather than empowerment.

AI must recognize that subjectivity is crucial to understanding citizens and their aspirations. Lived experiences, socio-economic realities, and cultural contexts cannot be reduced to data points alone. To build a just AI, we must move beyond binary gender frameworks, challenge dominant narratives, and center the voices of marginalized communities. The question is not just what AI can do, but whom it serves and whom it leaves behind.

Gender Digital Divide: Barriers to Access and Representation

The gender digital divide in India is a stark reality that restricts access to technology for women and marginalized gender communities. Limited access to mobile phones and the internet, together with limited digital literacy, exacerbates existing social inequalities.

- Mobile Ownership Disparity: Only 31% of women in India own mobile phones, compared to 61% of men (Oxfam India, 2022).

- Internet Access: The GSMA Mobile Gender Gap Report 2022 highlights that South Asia (including India) has one of the widest gender gaps in mobile ownership and internet usage.

- NFHS-5 (2019-21): The National Family Health Survey found that only 54% of women in India had ever used the internet, compared to 72% of men, indicating a significant digital divide.

- Transgender and Digital Exclusion: Transgender individuals face even greater digital exclusion due to societal stigma and lack of financial resources (CIGI, 2021).
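The ownership and internet-use figures in the list above can be compared on a common scale. The following is a minimal sketch, assuming a relative-gap definition (men's rate minus women's rate, divided by men's rate); the function name `relative_gap` and the `figures` dictionary are illustrative and do not come from the cited reports.

```python
def relative_gap(men_pct: float, women_pct: float) -> float:
    """Return the share by which the women's rate falls short of the men's rate.

    Assumed definition: (men - women) / men. This is an illustrative
    convention, not a method taken from the cited reports.
    """
    if men_pct <= 0:
        raise ValueError("men_pct must be positive")
    return (men_pct - women_pct) / men_pct


# Percentages quoted in the list above, as (men, women).
figures = {
    "mobile ownership (Oxfam India, 2022)": (61.0, 31.0),
    "ever used the internet (NFHS-5, 2019-21)": (72.0, 54.0),
}

for label, (men, women) in figures.items():
    gap = relative_gap(men, women)
    print(f"{label}: {men - women:.0f} points, relative gap {gap:.1%}")
```

With these inputs, the 30-point difference in mobile ownership corresponds to a relative gap of about 49.2%, and the 18-point difference in internet use to 25.0%. Reporting both numbers helps, because the same point difference means something different depending on how high the men's rate is.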

Caste, Religion, and Digital Exclusion: Structural Disparities in AI Access

Marginalized caste and religious communities face systemic exclusion from digital technologies. Socio-economic status, literacy rates, and infrastructural gaps further limit access.

- Scheduled Tribes (STs) are the least likely to afford digital access, followed by Scheduled Castes (SCs) (MGES Journal, 2023).

- Religious Divide: Muslims in India are 31% less likely to afford digital technologies than Hindus, while Sikhs and Christians are significantly more likely (MGES Journal, 2023).

- Urban-Rural Divide: Rural residents are 2.9 times less likely to afford digital technologies than urban dwellers (MGES Journal, 2023).

How Can We Build a Just AI for Marginalized Genders?

World Bank and ITU reports have documented the gender gap in digital access in India, yet discussions on AI development often overlook intersectional barriers.

- Binary Gender Frameworks: AI systems in India are embedded in hegemonic, binary gender frameworks. They largely address disparities between cisgender men and women and neglect the unique challenges faced by non-binary, transgender, Dalit, Adivasi, Muslim, and disabled communities.

- Bias in AI Systems: Urban, upper-caste, and corporate-driven AI narratives replicate historical biases, rather than dismantling them. This manifests in:

  - Predictive policing disproportionately targeting Dalit and Muslim communities.

  - Hiring algorithms that downgrade applications from women and transgender individuals.

  - AI-driven financial systems that reinforce exclusionary lending practices.

In such cases, AI ceases to be a neutral tool and instead becomes an instrument of systemic oppression. For a truly inclusive digital future, AI governance must address intersectional inequalities and prioritize marginalized voices in policy and technology development.

Conclusion

At Just AI Division: Data & Algorithms for Communities, we are asking: How can we build a just AI for marginalized genders?

AI development in India remains deeply embedded in hegemonic, binary frameworks of gender and exclusionary digital ecosystems. Existing discussions on the gender digital divide often reduce the issue to disparities between cisgender men and women, failing to account for the intersectional challenges faced by non-binary, transgender, Dalit, Adivasi, Muslim, and disabled communities. This omission perpetuates structural inequalities rather than dismantling them.

Furthermore, the narrow definitions of AI inclusivity, often dictated by urban, upper-caste, and corporate-driven perspectives, contribute to the replication of historical biases within algorithmic systems. These biases manifest in multiple forms, including predictive policing that disproportionately targets Dalit and Muslim communities, hiring algorithms that systematically downgrade applications from women and transgender individuals, and AI-driven financial systems that reinforce exclusionary lending practices. In such cases, AI ceases to be a neutral technological tool and instead becomes an instrument of systemic oppression.

For AI to truly serve marginalized communities, it must be designed with intersectionality in mind, ensuring that all voices, especially those from historically excluded groups, are heard. AI governance must prioritize inclusive policies, ethical data practices, and equitable digital access to dismantle barriers and build a just, fair, and representative technological future.